Free iPhone and Apple Watch upgrades deliver great new features

Apple's incoming accessibility features are going to make the iPhone and Apple Watch even more useful

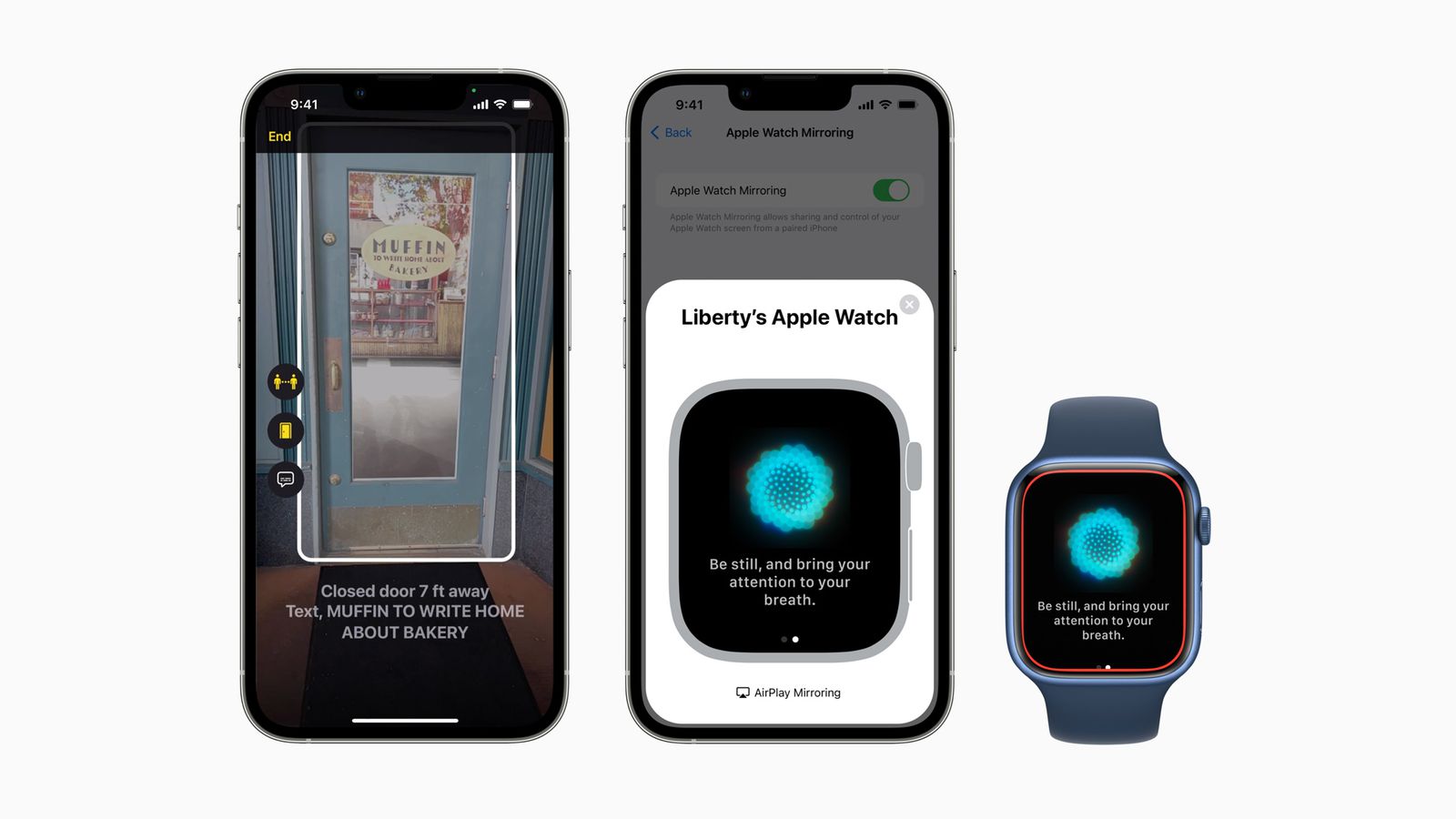

Apple's iPhone 13 and Apple Watch Series 7 already have some very useful accessibility features for people with mobility issues or sensory issues, and they'll be getting even more via free software updates over the next few months. Apple has announced a range of new features including Door Detection, Apple Watch Mirroring and Live Captions.

Here's how Apple describes the new features: "Using advancements across hardware, software, and machine learning, people who are blind or low vision can use their iPhone and iPad to navigate the last few feet to their destination with Door Detection; users with physical and motor disabilities who may rely on assistive features like Voice Control and Switch Control can fully control Apple Watch from their iPhone with Apple Watch Mirroring; and the Deaf and hard of hearing community can follow Live Captions on iPhone, iPad, and Mac."

Door Detection, Live Captions and Watch Mirroring

Door Detection is part of a new detection mode that's coming to the iOS Magnifier alongside People Detection and Image Descriptions. It's designed to help people with low or no vision locate a door in an unfamiliar place, and to understand how far they are from it and how it opens: by pushing, by turning a handle and so on. It can also read signs and symbols around doors, such as room numbers, accessibility logos or warning signs. Door Detection will only work with iPhone models that have a LiDAR Scanner, though: that's the iPhone 12 Pro and iPhone 12 Pro Max and the iPhone 13 Pro and iPhone 13 Pro Max. We don't know yet if LiDAR is coming to the more affordable iPhone 14 models or if it'll remain a Pro/Pro Max feature.

Watch Mirroring enables users to control their Apple Watch with their iPhone's assistive features, such as Voice Control and Switch Control, using inputs including voice commands, sound actions, head tracking and external switches. It's designed to make Apple Watch health features such as blood oxygen sensing, heart rate and mindfulness available to people who can't use the touchscreen and Digital Crown.

Last but not least, Live Captions is coming to iOS, iPadOS and macOS, offering automatic subtitle generation from any audio content – phone and FaceTime calls, videoconferencing apps, streaming media and more. On the Mac you'll also be able to type your response and have it spoken aloud. One of the best features is the automatic attribution in group calls, which makes it clear who's saying what.

For full details of all the accessibility features Apple has planned for this year, check out the Apple Newsroom report.


Writer, musician and broadcaster Carrie Marshall has been covering technology since 1998 and is particularly interested in how tech can help us live our best lives. Her CV is a who’s who of magazines, newspapers, websites and radio programmes ranging from T3, Techradar and MacFormat to the BBC, Sunday Post and People’s Friend. Carrie has written more than a dozen books, ghost-wrote two more and co-wrote seven more books and a Radio 2 documentary series; her memoir, Carrie Kills A Man, was shortlisted for the British Book Awards. When she’s not scribbling, Carrie is the singer in Glaswegian rock band Unquiet Mind (unquietmindmusic).