Apple to tackle child abuse by monitoring images on phones and in messages

Apple will put its image analysis to good use in combating child abuse with three new features

Apple is introducing a series of new tools to analyze the photos on a user’s iCloud account for explicit child images, intervene in searches for abusive material and block explicit images from being sent to children.

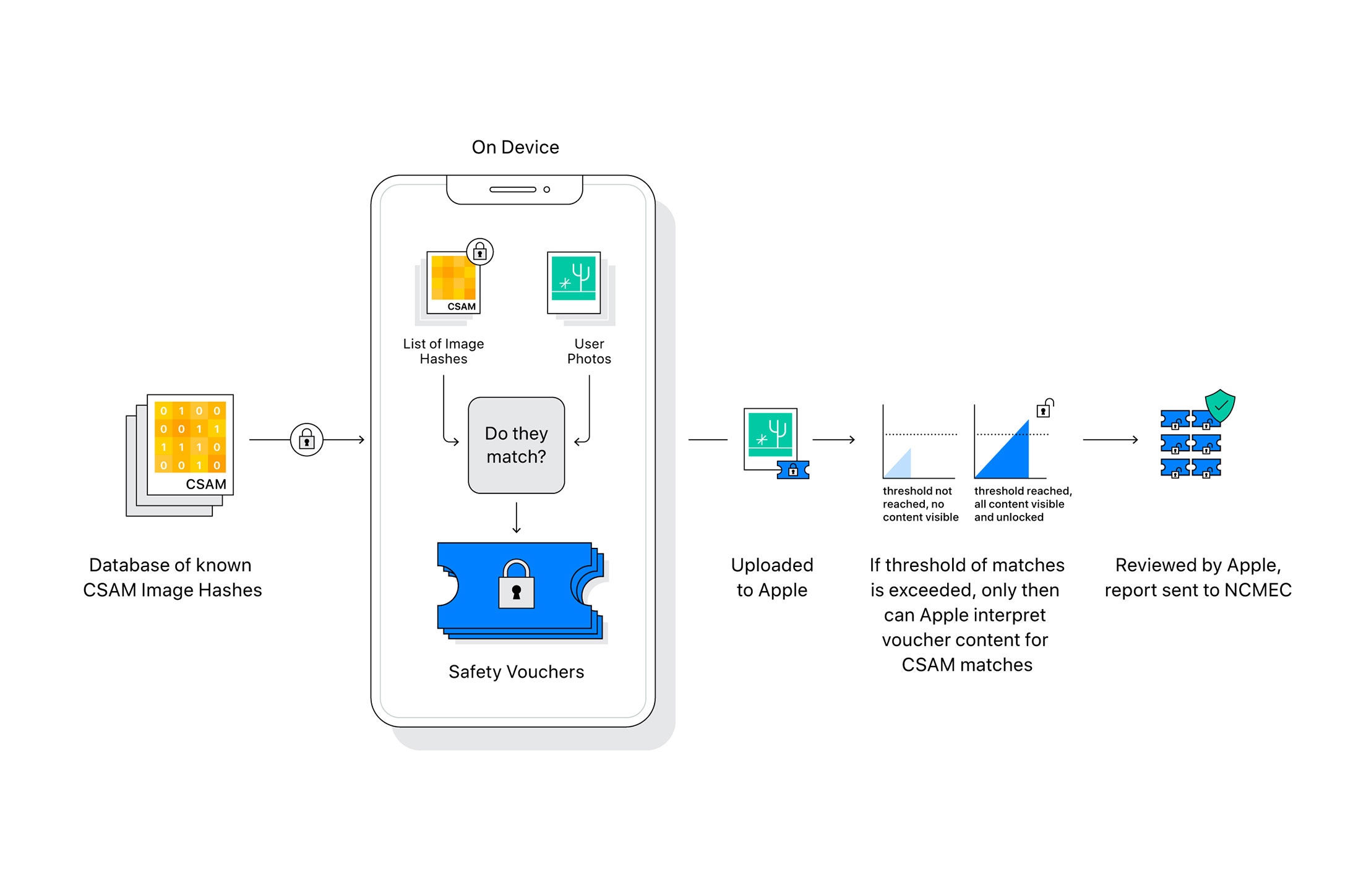

Apple’s image analysis already lets users search their photos quickly thanks to face detection and recognition tools, and it can help improve pictures by recognizing the type of scene being photographed. Building on this technology, Apple will compare iCloud photos against a database of known child abuse images. If the number of matches passes a set threshold, and after a manual review by Apple, a report will be sent to the National Center for Missing and Exploited Children (NCMEC).
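To illustrate the threshold idea, here is a minimal sketch, assuming each photo has already been reduced to a short fingerprint (a hash) and that the known-image database is a plain set of such hashes. The function names, the hash strings and the threshold value are all hypothetical. Apple's real system performs this matching cryptographically, so neither the device nor Apple sees the comparison in the clear; this sketch skips that part entirely.

```python
from typing import Iterable, Set


def count_matches(photo_hashes: Iterable[str], known_hashes: Set[str]) -> int:
    """Count how many of an account's photo hashes appear in the known-image set."""
    return sum(1 for h in photo_hashes if h in known_hashes)


def should_report(photo_hashes: Iterable[str], known_hashes: Set[str], threshold: int) -> bool:
    """Flag an account only once its number of matches reaches the threshold.

    The threshold is a hypothetical parameter; a single match is not enough.
    """
    return count_matches(photo_hashes, known_hashes) >= threshold


# Hypothetical example data
known = {"a1f3", "9c2e", "77b0"}
account = ["0000", "a1f3", "1234", "9c2e"]

print(count_matches(account, known))               # 2
print(should_report(account, known, threshold=3))  # False
```

Requiring several matches rather than one is what keeps an occasional false match from triggering a report on its own.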

The system converts each image into a string of numbers, known as a NeuralHash, which can then be compared with the NeuralHash values of images in a database of known Child Sexual Abuse Material (CSAM). Visually similar images produce the same NeuralHash, so minor edits such as a color change don't prevent a match. Cryptographic techniques ensure that non-matching images are not shared, and according to Apple's white paper, the system has less than a one in one trillion chance per year of incorrectly flagging a given account.
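Apple's NeuralHash relies on a neural network and is not reproduced here. As a rough stand-in, this sketch uses a much simpler perceptual hash, a basic "average hash", to show the same property: a picture and a brightened copy share a hash, while a different picture does not. The tiny 4×4 grayscale "images" and the function names are hypothetical.

```python
from typing import List

Image = List[List[int]]  # grayscale pixel values, 0-255


def average_hash(img: Image) -> str:
    """Toy perceptual hash: one bit per pixel, set when the pixel is brighter than the mean."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    bits = "".join("1" if p > mean else "0" for p in pixels)
    # Pack the bits into a hex string, four bits per hex digit
    return f"{int(bits, 2):0{len(bits) // 4}x}"


def hamming(a: str, b: str) -> int:
    """Number of differing bits between two hex-encoded hashes."""
    return bin(int(a, 16) ^ int(b, 16)).count("1")


original = [
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
]
# Brightening every pixel shifts the mean by the same amount, so every bit is unchanged
brightened = [[p + 40 for p in row] for row in original]
different = [
    [200, 200, 10, 10],
    [200, 200, 10, 10],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]

print(average_hash(original))    # 5a5a
print(average_hash(brightened))  # 5a5a
print(average_hash(different))   # cc33
print(hamming(average_hash(original), average_hash(different)))  # 8
```

Because the hash records only each pixel's brightness relative to the image's average, uniform adjustments leave it intact, whereas a genuinely different layout flips many bits.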


The two images on the left have the same NeuralHash value despite the color change, while the one on the right has a different value.

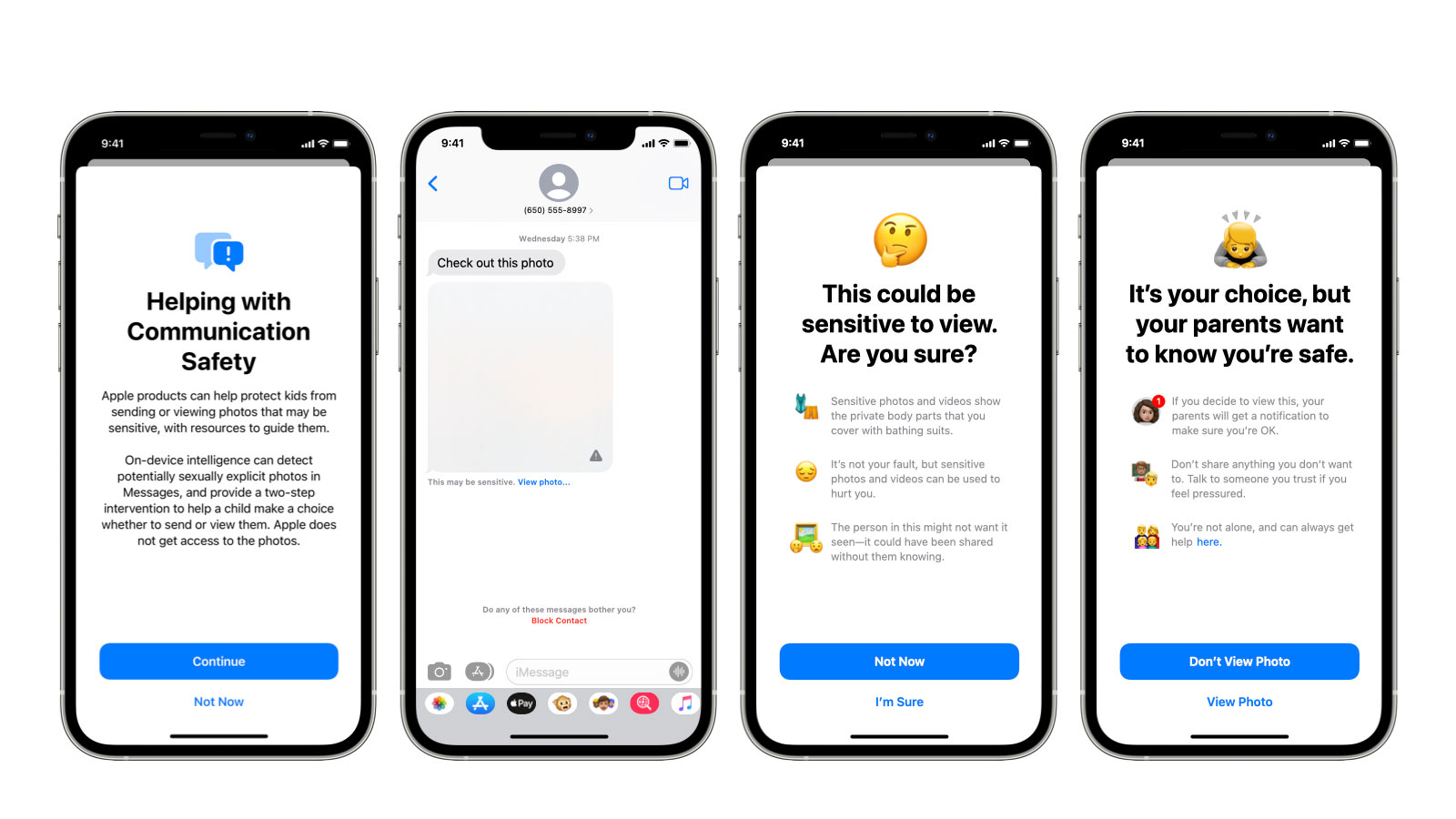

In addition, Siri and search functions will warn users who search for abusive child material, as well as provide resources for users to file reports of suspected abuse. Parents will also be able to activate a feature within Apple Messages that blurs out images sent to their child’s phone that are deemed to be explicit.

Images can still be viewed with a second tap, but if the child chooses to view one, their parents will be alerted. The phone will also show several warnings before the image is displayed, giving the child a chance to back out without seeing the picture.

According to Bloomberg, which first published the story, these features are expected to roll out later this year.

The image checking process summarised


As T3's Editor-in-Chief, Mat Gallagher has his finger on the pulse for the latest advances in technology. He has written about technology since 2003 and after stints in Beijing, Hong Kong and Chicago is now based in the UK. He’s a true lover of gadgets, but especially anything that involves cameras, Apple, electric cars, musical instruments or travel.