Your AirPods could soon see as well as hear, and this is why that's very exciting

Cameras in your ears? Whatever next?

Quick Summary

Researchers at the University of Washington have shown off prototype earbuds with built-in cameras that can work with AI.

The VueBuds capture images that allow the user to ask questions and have responses read to them, live.

Apple could soon be dishing out AirPods that not only hear your surroundings but see them too.

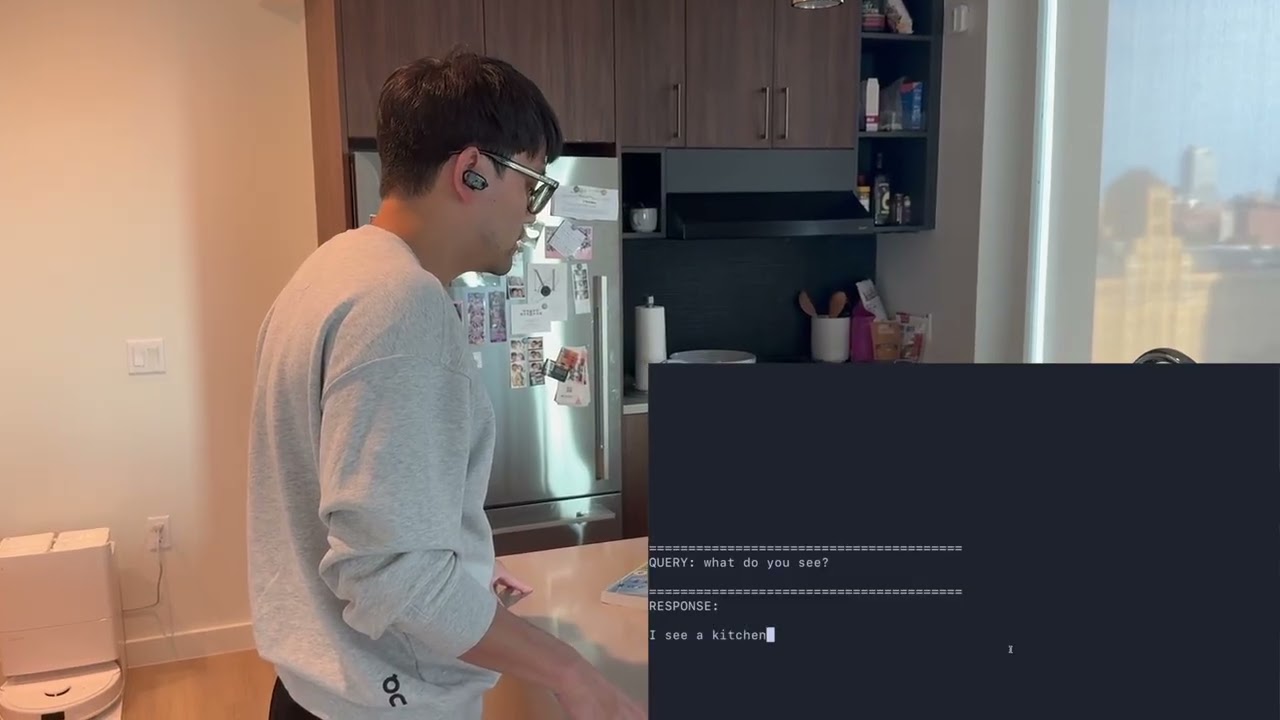

A newly published paper explores what it calls VueBuds: prototype earbuds, shown off by researchers at the University of Washington, that come equipped with tiny cameras.

The idea being explored is that AI can be better integrated into daily life - seeing what we see - without the need to hold up a phone or wear AR glasses.

Rumours of Apple working on AirPods with cameras have already started doing the rounds. Could this be the future for Apple AirPods and, indeed, all high-end future earbuds?

How do VueBuds work?

The researchers took a pair of Sony WF-1000XM3 earbuds and fitted them with cameras to capture the wearer's surroundings. These were able to see with a usable 98 to 108 degree field of view. Stitching the two images together made for a response time of just one second.

The processing can be kept on the connected device, and images, which can be taken with a recording light on, can be deleted immediately.

The cameras are roughly the size of a grain of rice and use very little power to capture a low-res greyscale image. This not only keeps battery drain to a minimum but also keeps the data compact for transmission over Bluetooth.
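To get a feel for why a low-res greyscale image keeps things compact, here is a rough back-of-the-envelope sketch. The resolution used is a made-up placeholder, not a figure from the paper, and the `frame_bytes` helper is purely illustrative. It simply compares the uncompressed size of a greyscale frame (one value per pixel) with a full-colour frame (three values per pixel).

```python
def frame_bytes(width: int, height: int, channels: int, bits_per_channel: int = 8) -> int:
    """Return the uncompressed size of a single frame in bytes."""
    return width * height * channels * bits_per_channel // 8


# Hypothetical resolution chosen for illustration only; the article does not give one.
WIDTH, HEIGHT = 320, 240

grey = frame_bytes(WIDTH, HEIGHT, channels=1)  # greyscale: one channel per pixel
rgb = frame_bytes(WIDTH, HEIGHT, channels=3)   # colour: red, green and blue channels

print(f"Greyscale frame: {grey} bytes")
print(f"Colour frame:    {rgb} bytes")
print(f"Colour is {rgb / grey:.0f}x larger before any compression")
```

Whatever the real resolution turns out to be, dropping colour cuts the raw data to a third at the same pixel count, which matters when every frame has to squeeze through a Bluetooth link.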


The researchers said that since AR and VR haven't seen mainstream uptake, this offers another way to add visual smarts to AI assistants, hands-free. Processing images locally also helps to address privacy concerns.

The Apple rumours centre on the company exploring the use of infrared cameras in AirPods, to enhance spatial awareness in a way that could help unlock more useful AI features.

In one example given by the researchers, a person could look at a food label in another language and have it read out to them in their native tongue.

Luke is a freelance writer for T3 with over two decades of experience covering tech, science and health. Among many things, Luke writes about health tech, software and apps, VPNs, TV, audio, smart home, antivirus, broadband, smartphones and cars. In his free time, Luke climbs mountains, swims outside and contorts his body into silly positions while breathing as calmly as possible.
